Big Algo Updates Do Kill

On August 1st 2018, Google launched one of the most aggressive algorithmic changes we had seen as an SEO company since the notorious 2013 Google update, “Penguin”.

The August update came like any other Google update: no warnings and lots of speculation. Overnight, sites in some of the most affected niches (finance and health) saw up to a 50% loss in traffic and rankings.

Many affiliate marketers who rely on highly competitive organic rankings on a national level had to scrap their websites and start all over again.

This is the third major algorithm update I’ve been through as an organic SEO services company. One of the more frustrating things about this update was the fact that both white hat and black hat SEOs saw their websites affected (or not affected) in the same ways. How frustrating!

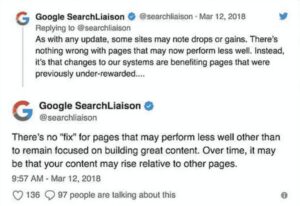

To make matters more complicated, Google announced and responded to webmasters with similar language to their infamous Penguin update.

In summary, they said that there’s really nothing webmasters can do about the update, and if your site has been devalued, just try to do better next time. Which is to basically say, “You suck! You do better next time!”

And worse, they didn’t even spell “benefitting” correctly!

Plainly, SEOs lost their shizzle.

Who Were the Biggest Losers?

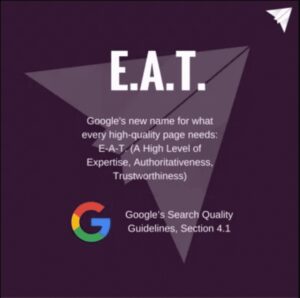

The broad core algorithm update definitely seemed to devalue some specific niches more than others. The data shows the most affected sites fall within the Your Money or Your Life (YMYL) category, with the impact depending on how well their expertise, authority, and trustworthiness (EAT) were scored.

What is EAT?

Expertise, Authority, and Trustworthiness are the standards by which Google judges whether a website is worthy of being ranked for a topic.

Basically, high-quality pages get the rankings while low-quality pages don’t. Here are some of the factors that can affect your EAT:

- Low-quality content – not written by an expert.

- Duplicate content – copied from another expert’s website.

- Short content – length can also indicate to Google that an article is not exhaustive enough to solve the reader’s problem.

What is YMYL?

Your Money or Your Life pages are defined by Google as pages that could impact a person’s future happiness, health, financial stability, or safety. Here’s how Google breaks this down:

- Pages that focus on financial transactions or shopping

- Sites that provide financial information that’s sensitive like taxes or investments

- Articles that provide information on medical conditions or diseases & how to assess and treat them properly.

- Legal themed pages that offer advice on a personal injury, family law, or other sensitive legal issues.

- Pages that offer advice on home or car repair that could potentially be disastrous to the quality of life of a person if the incorrect information is given.

I’ve taken this graphic from Search Engine Journal, which effectively depicts the top niches affected.

This graphic shows the ranking changes before and during the Google update. The creator of this graphic, Mordy Oberstein from RankRanger, thinks that this data shows movements on a scale never seen before from a previous Google update.

SEMrush Sensor was also off the charts with a score of 9.4 on the day of the change. Some of the top affected niches were health, finance, auto, and fitness.

What Can You Do?

Well, Google has one piece of advice…“Do better next time!”

Wouldn’t it be nice if massive world problems could be solved with advice like this?

I’d receive the parent of the year award if I gave this advice to my kids every time they failed, “Son, just do better next time”!

Nailed it!

Seriously, how do we take such nebulous advice to practical application? Well, let’s understand a little bit more about what Google wants for its users.

The Road to Recovery: Long Term + Quality and Relevance

If there’s one thing that Google continues to clarify in every update, it’s that quick SEO wins without a solid foundation of long-term quality & relevancy goals will not win in 2018 onward. Black hat tactics will always be around, but Google raises the bar of quality every year making black hat SEO more and more difficult.

On my whiteboard I have two quotes for the week:

“Success rides on the wings of consistent discipline.”

“Vision without execution is hallucination.” – Thomas Edison

A lot of SEOs spend their time hallucinating about the results they wish they had as opposed to actually creating a strategic plan and executing that plan with consistent discipline.

The key to recovery is sticking to a methodology that produces quality and relevance to Google over the long term.

I call this methodology TRAP. I learned the concept of TRAP from an SEO buddy of mine named Stephen Kang. He’s the founder of the popular Facebook group, SEO Signals Lab, and has done awesome things for the SEO community.

Here’s how the acronym of TRAP breaks down:

- Technical – Can Google crawl my website?

- Relevancy – Is it crystal clear what my website does to help searchers?

- Authority – Is my website backlink worthy and authoritative?

- Popularity – Is my website popular on social channels & PR?

Technical – Start with the Foundation

Technical is the first place we start when looking at a website. The goal is to remove any blockers inhibiting Google from crawling a website.

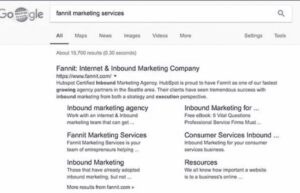

Check Devaluation

Devaluation is a signal that your website has some serious on-site technical issues. Here’s how you can determine if your site has been devalued with a “site:” operator search.

When doing a Google search for your brand you should see your website show as #1. If this is not the case you’ve most likely been hit with an algorithmic penalty.

Using the site operator search, you can go a step further by searching “site:yourdomain.com” in Google. The first result should also be your home page.

Doing these searches helps you determine if you have a domain or page devaluation. Using a Google search of a paragraph of text within one of the pages of your website can also help you find a site devaluation. Simply copy a paragraph of text from one of your pages.

Then, paste that content as a search in Google. You should see your website pop up as the first result. If not, you may be dealing with a penalty.
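
If you run these three checks often, it helps to generate the search URLs instead of typing them. Here’s a minimal Python sketch of that idea. The function name and the 32-word cap on the pasted paragraph are my own assumptions (very long queries tend to get cut off), so adjust them to taste.

```python
from urllib.parse import urlencode

GOOGLE_SEARCH = "https://www.google.com/search?"

def devaluation_queries(brand, domain, paragraph, max_words=32):
    """Build the three manual checks: brand search, site: search, paragraph search."""
    # Assumption: trim the pasted paragraph so the query stays a reasonable length.
    snippet = " ".join(paragraph.split()[:max_words])
    searches = {
        "brand": brand,
        "site": f"site:{domain}",
        "paragraph": snippet,
    }
    # urlencode escapes spaces and the colon in the site: operator.
    return {name: GOOGLE_SEARCH + urlencode({"q": query})
            for name, query in searches.items()}

for name, url in devaluation_queries(
        "Acme Plumbing", "acmeplumbing.com", "We fix leaks fast").items():
    print(name, url)
```

Open each URL in a browser and check whether your own site is the first result. The script only builds the links; judging the results is still a manual step.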

Check HTTP/HTTPS Conflicts

We also want to be sure there’s no conflict between HTTP/HTTPS. This means that Google should only be indexing the HTTPS version of your website. To check your site use this command: site:domain.com -inurl:https.

On WordPress, a redirect plugin can properly 301 redirect from HTTP to HTTPS.
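
If you already have a list of indexed or crawled URLs (from a crawler export, for example), the same HTTP/HTTPS check can be done offline. This is a rough Python sketch under that assumption; the function names are made up for illustration.

```python
from urllib.parse import urlsplit

def non_https_urls(urls):
    """Return the URLs that are not served over HTTPS."""
    return [u for u in urls if urlsplit(u).scheme.lower() != "https"]

def https_target(url):
    """Return the HTTPS version of a URL, i.e. where a 301 should point."""
    return urlsplit(url)._replace(scheme="https").geturl()

crawled = ["https://example.com/", "http://example.com/old-page?ref=1"]
for url in non_https_urls(crawled):
    print(url, "->", https_target(url))
```

Every URL this prints is a candidate for a 301 redirect to its HTTPS twin.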

Crawlable Pages – Ensure Google Can Only Crawl What Matters

We want to be sure that unimportant pages are not crawled by Google. Think of this as having a library that’s always organized.

Keeping your content organized will ultimately improve your relevance by super niching your indexed content to the stuff that actually matters.

Here are some examples of content you don’t want to index:

- Categories (especially empty categories)

- Login pages

- Internal search result pages

- WordPress image pages

- Product review forms

- Checkout & cart pages

- Product comparison pages

- Session IDs

- Header footer pages (on some WP front end editors)

You can find these pages by doing a site operator search as follows, “site:domain.com inurl:review.”
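
On bigger sites, running one operator search per page type gets tedious. If you have a crawl export, a quick script can flag likely candidates instead. The Python sketch below is only an illustration: the URL patterns are my guesses at what these pages commonly look like (for instance, `?s=` for WordPress internal search), not a definitive list, so swap in the patterns your own site uses.

```python
# Hypothetical URL fragments for the page types listed above; first match wins.
LOW_VALUE_PATTERNS = [
    ("internal search", "?s="),
    ("login", "login"),
    ("cart", "/cart"),
    ("checkout", "/checkout"),
    ("review form", "review"),
    ("session id", "sessionid="),
    ("category", "/category/"),
]

def noindex_candidates(urls):
    """Map each URL that matches a low-value pattern to the label it matched."""
    flagged = {}
    for url in urls:
        lowered = url.lower()
        for label, fragment in LOW_VALUE_PATTERNS:
            if fragment in lowered:
                flagged[url] = label
                break
    return flagged

crawled = [
    "https://example.com/cart/",
    "https://example.com/blog/seo-guide/",
    "https://example.com/?s=shoes",
]
print(noindex_candidates(crawled))
```

Treat the output as a review list, not an automatic noindex list. A blog post with “review” in its slug would get flagged too, so check each hit by hand.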

Crawl Errors

The next place we want to look at is a programmatic crawl of the website with SEMrush, Screaming Frog, Agency Analytics, and Search Console.

SEMrush is a paid service that provides an accurate view of technical crawl issues. We also use the paid version of Screaming Frog and Agency Analytics to verify the reported errors from SEMrush.

After compiling all of the data, we can set priority on what items to fix. Not all items are going to be fixed as we will manually check each item and qualify them as a problem/no problem.

Other crawl errors that we would check within Search Console include 404s, structured data errors, and the trend of Google’s daily crawl over the past 30 days. It’s important to check the structured data: plugins or improperly placed markup can throw errors to Google, which affects crawlability.

Page Speed

While running our programmatic check we’ll head over to GTmetrix and check out our site speed.

We want to be sure that our Google PageSpeed score is at least 80 with a page load time below 3 seconds. The ideal target is 90+ with a page load time of 2 seconds or less.
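
If you’re checking a lot of pages, it can help to turn those thresholds into a tiny grading function. This Python sketch just encodes the numbers above; the function name and the three labels are my own.

```python
def grade_page_speed(score, load_seconds):
    """Grade a page against the targets above: 90+/2s ideal, 80+/under 3s minimum."""
    if score >= 90 and load_seconds <= 2:
        return "ideal"
    if score >= 80 and load_seconds < 3:
        return "acceptable"
    return "needs work"

print(grade_page_speed(95, 1.8))
print(grade_page_speed(85, 2.5))
print(grade_page_speed(85, 3.0))
```

Note that both conditions have to hold. A 95 score with a 2.5 second load time only grades as acceptable.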

Faster websites promote easier indexing and improved user behavior which has an indirect effect on search engine rankings. If you believe your website has been devalued, fixing your website speed can have a drastic impact on your rankings.

This score is an A+:

Next, we want to check the quality of our content which is the relevancy step. I’ll be covering how you can quickly QC your site content to optimize your relevancy signals in part 2 of “How to Lift an Algorithmic Penalty Using TRAP.”

Relevancy – Use the Right Keywords

After doing comprehensive keyword research, you’ll need to ensure you’re avoiding thin and duplicate content.

Hummingbird and Panda can be your nemeses when you’re going up against an algorithmic devaluation. This is not easy to accomplish either. One of the most challenging areas is the eCommerce niche.

Thin Content

To determine if you have thin content you’ll want to use the tool Screaming Frog or Siteliner.com to crawl your entire website. I prefer Siteliner, but it can be a tad expensive if you’re crawling more than 250 pages. Anything under 250 pages is free!

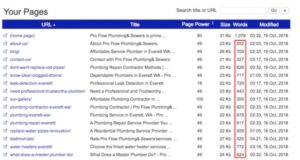

You’ll want to find all pages on your site (like these) that have fewer than 1,000 words and do the following:

- Bulk up ALL content that’s fewer than 1,000 words

- Noindex any non-important pages that don’t pertain to subject matter

- Use a canonical tag for similar product pages and bulk up the main product page (for eCommerce)

Here’s an example screenshot from Siteliner showing which pages we’d want to fix:

Now that we’ve identified thin content, we need to understand why that content is thin. It’s common for these crawlers to report a lot more words on a page because they are literally crawling the header, footer, sidebar, and paragraph sections of that page.

That’s probably somewhere around an extra 200-300 words of content per page, which means you’re probably going to end up closer to 700-800 words of paragraph content on every page (not 1,000).
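
To see the gap between the crawler’s number and your real paragraph content, you can count both yourself. Here’s a minimal Python sketch using only the standard library. It is not how Siteliner or Screaming Frog count words. It assumes your theme wraps its boilerplate in `header`, `footer`, `nav`, and `aside` tags, which may not be true for your site.

```python
from html.parser import HTMLParser

CHROME_TAGS = {"header", "footer", "nav", "aside"}  # assumed boilerplate wrappers
IGNORED_TAGS = {"script", "style"}                   # never count code as words

class WordCounter(HTMLParser):
    def __init__(self):
        super().__init__()
        self.chrome_depth = 0
        self.ignored_depth = 0
        self.total_words = 0   # everything a crawler might count
        self.body_words = 0    # words outside header/footer/nav/aside

    def handle_starttag(self, tag, attrs):
        if tag in CHROME_TAGS:
            self.chrome_depth += 1
        elif tag in IGNORED_TAGS:
            self.ignored_depth += 1

    def handle_endtag(self, tag):
        if tag in CHROME_TAGS and self.chrome_depth:
            self.chrome_depth -= 1
        elif tag in IGNORED_TAGS and self.ignored_depth:
            self.ignored_depth -= 1

    def handle_data(self, data):
        if self.ignored_depth:
            return
        words = len(data.split())
        self.total_words += words
        if self.chrome_depth == 0:
            self.body_words += words

def count_words(html):
    """Return (total_words, body_words) for one page of HTML."""
    counter = WordCounter()
    counter.feed(html)
    counter.close()
    return counter.total_words, counter.body_words

page = ("<header><nav>Home About Contact</nav></header>"
        "<main><p>We repair leaky pipes today</p></main>"
        "<footer>Copyright Acme</footer>")
print(count_words(page))
```

If the second number is well under the first, the page is thinner than the crawl report suggests.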

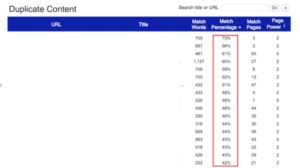

Duplicate Internal Content

The next thing we want to look at with Siteliner.com is the amount of internal duplicate content. This is a massive infraction that’s probably one of the most overlooked errors with on-page SEO.

The most common ways that duplicate content presents itself are through the copying of bullet points, paragraphs, about us sections, how to’s, and call to action paragraphs.

The key is to reduce the amount of thematic duplicate content throughout your site by ensuring each page is at least 90% unique from other pages on your site. If your site has more than 10% duplicate content overall, you’re going to need to do a deep dive.

Here are some action steps you can take:

- Run a Siteliner scan to find duplicate content pages

- Reduce page by page duplicate content to less than 10%

- Note similar thematic sections that you keep on duplicating across your pages and ensure your writers don’t add those sections in the future.
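
For a rough feel of what “less than 10% duplicate” means, you can estimate overlap between pages yourself. The Python sketch below compares overlapping five-word sequences (often called shingles). This is a stand-in technique I’m using for illustration, not Siteliner’s actual method, and its percentages won’t match Siteliner’s numbers exactly.

```python
import re

def shingles(text, size=5):
    """Return the set of overlapping word sequences of the given size."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}

def duplicate_share(page_text, other_pages, size=5):
    """Fraction of this page's shingles that also appear on any other page."""
    own = shingles(page_text, size)
    if not own:
        return 0.0
    seen_elsewhere = set()
    for other in other_pages:
        seen_elsewhere |= shingles(other, size)
    return len(own & seen_elsewhere) / len(own)

about = "Call us today for a free quote on your next plumbing job"
service = "Call us today for a free quote on water heater repair"
print(f"{duplicate_share(service, [about]):.0%} of the service page is duplicated")
```

Run it on the plain text of each page against the rest of the site and look at anything that comes out above 0.10.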

We can see from the image below that this example site has a pretty high level of duplicate content across other pages on their website.

We run an internal duplicate content audit every quarter for our clients to make sure we are finding and addressing any internal duplicate content issues quickly.

E-commerce Duplicate Content

For eCommerce, this can be a big area of frustration, as you’re trying to provide unique descriptions for products and there can be inevitable overlap.

I can’t stress how imperative it is that you consider your methodology for providing unique well-written content on your product pages.

One of the biggest offender platforms is Shopify. The tabbed product descriptions found in many themes can often encourage multiple duplicate content issues across all product pages of a website.

A good way to avoid duplicating your content when setting up your Shopify eCommerce store is to use special tools, like SaleSource.

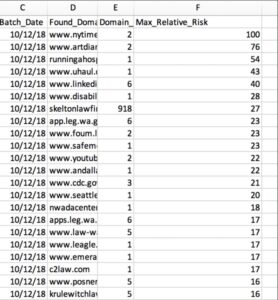

External Duplicate Content

For external duplicate content, we are going to use copyscape.com. You’ll want to use the batch analysis tool for this audit. Grab the summary URLs that were crawled from Siteliner (download a .csv) and place those URLs into the batch analysis tool.

This is a paid audit and can become quite expensive. If you’re looking to save a few bucks go ahead and whittle down the URLs that you want to examine to only the ones that are key for SEO.

Once you’ve run the batch, you’re going to need to download your results as a .csv file and then sort them by the highest relative risk of duplicate content.

You should get a view like this:

Now, take this data and either rewrite your content to ensure it’s 90% unique or request that the webmaster who “borrowed” your content take it down.

Sometimes these pages/articles are doing nothing for you (badly written old articles). It might be best to simply take down the article and do a 301 redirect.

Internal Anchor Text Distribution

The final thing that we want to analyze for relevancy is your internal anchor text distribution. To keep from over-optimizing, we are just going to use the rule of 25/25/25/25: 25% exact match/money, 25% random, 25% brand, and 25% related.

Use a tool like Screaming Frog to crawl every single link across your site. You’re going to want to map out how these links are currently supporting your primary pillar/cluster and then make adjustments based upon your anchor text distribution.

Track everything within a Google Sheet and constantly update/track your work as you add more content to the site to keep yourself within compliance.
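
Once every internal link is labeled with one of the four anchor types, checking the 25/25/25/25 rule is simple arithmetic. Here’s a minimal Python sketch. The category labels and the 5-point tolerance are my own assumptions, and you’d still need to label each anchor by hand (or in your sheet) first.

```python
from collections import Counter

# Target share for each anchor type, per the 25/25/25/25 rule.
TARGET = {"exact": 0.25, "random": 0.25, "brand": 0.25, "related": 0.25}

def anchor_distribution(categories):
    """Share of each anchor type; labels outside TARGET are ignored."""
    counts = Counter(categories)
    total = sum(counts[c] for c in TARGET)
    return {c: (counts[c] / total if total else 0.0) for c in TARGET}

def over_target(distribution, tolerance=0.05):
    """Anchor types whose share exceeds the target by more than the tolerance."""
    return [c for c, share in distribution.items()
            if share > TARGET[c] + tolerance]

labels = ["exact"] * 6 + ["brand"] * 2 + ["random"] + ["related"]
dist = anchor_distribution(labels)
print(dist)
print("Over-used:", over_target(dist))
```

In this example, exact match anchors make up 60% of links, so that’s the type to dial back as you add new content.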

Now that our relevancy signals are squeaky clean we are going to audit the authority of our backlinks. This will come in part three!

Authority – Are Your Backlinks Building (Or Destroying) Your Rankings?

Authority is the third step in T.R.A.P. and it involves taking a serious look at your site’s backlinks and asking a few important questions:

- Are your backlinks coming from quality sources?

- Is image hotlinking slowing down your site?

- How can you forge more backlinks?

Let’s learn how to answer each of these and see how you can leverage backlinks to help your website recover from a Google algorithmic penalty.

Where are your backlinks coming from?

The first thing you need to do is make sure that all the backlinks pointing to your site are coming from quality, well-trusted sources. If you have links coming from low-quality or spam sites, Google won’t wait long before hitting you with a ranking penalty.

To start, open Ahrefs and type your site into the search bar. Once your site information loads, look at the navigation sidebar on the left and click “Backlinks.”

At the top of the “Backlinks” window, click “All” to see all your site’s backlinks.

You will be presented with a long list of backlinks. Above the backlinks, you will see three options: “Live,” “Recent,” and “Historical.” Click “Historical.”

Now click “DR” to sort the list by domain rating. You want the domain rating sorted from weakest to strongest.

Time to get dirty!

Go through your backlinks and disavow all the links from weak sites with a domain rating less than 5, as well as any backlinks coming from porn, gambling, or hacked sites. Also disavow a backlink if it is coming from a highly rated, but poorly visited site. Basically, disavow any backlink coming from a site you wouldn’t want your name associated with.

Don’t know how to disavow backlinks? Check out this article by Search Engine Journal to learn how!

If you accidentally disavow a link, don’t worry, you can un-disavow it and Google will return the link juice to you.
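
If your audit lives in a spreadsheet, the disavow list can be generated from it. The Python sketch below applies the rules above (domain rating under 5, or manually flagged) and writes lines in the `domain:` format that Google’s disavow file accepts, with `#` for comments. The dictionary keys (`domain`, `dr`, `flagged`) are hypothetical column names, not an Ahrefs export format.

```python
def disavow_lines(backlinks, min_dr=5):
    """Build disavow file lines for weak or manually flagged referring domains."""
    domains = sorted({
        link["domain"].lower()
        for link in backlinks
        # Rule from the audit: DR below the cutoff, or flagged by hand
        # (porn, gambling, hacked, or anything you don't want your name on).
        if link["dr"] < min_dr or link.get("flagged", False)
    })
    return ["# Domains flagged in backlink audit"] + [f"domain:{d}" for d in domains]

audit = [
    {"domain": "Spam.example", "dr": 2},
    {"domain": "good.example", "dr": 60},
    {"domain": "casino.example", "dr": 40, "flagged": True},
]
print("\n".join(disavow_lines(audit)))
```

Save the output as a plain text file and review it line by line before uploading. The script can’t tell whether a low-DR site is actually a harmless local blog.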

Is image hotlinking damaging your site’s rankings?

Image hotlinking is a negative SEO practice that can damage your SEO by slowing down your site’s speed and sending bad link juice to your site via backlinks.

What is hotlinking?

Hotlinking occurs when someone posts an image from your site onto their site via a direct link back to your site — instead of them downloading the image and uploading it onto their own server. This means that whenever a visitor views your image on the other site, the other site is stealing your bandwidth to display the image. The more people stealing your bandwidth, the slower your site will load.

The quickest way to find out if images on your site are being hotlinked is to type this query into the Google Images search bar:

inurl:yourdomain.com -site:yourdomain.com

Replace “yourdomain.com” with your site’s domain and any results that appear are hotlinks!

To prevent hotlinking from slowing down your site, you can download a plugin or edit your general .htaccess rules.

The only reason you shouldn’t prevent hotlinking is if your site uses a dedicated Content Delivery Network (CDN) for image processing.

If you use a CDN, image hotlinking can actually be a valuable way to increase your site’s backlinks. In this case, just make sure you conduct a hotlink audit to ensure that the backlinks coming from your hotlinking are sending you good SEO juice (i.e. not coming from porn or gambling sites and the like). Disavow all your weak hotlinks, just as you would with your regular backlinks.
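
For the hotlink audit itself, one option is to look at the referrer on image requests in your server logs. This Python sketch shows only the core check, under the assumption that you can pull the referrer for each image request out of your logs yourself. The function name is made up for illustration.

```python
from urllib.parse import urlsplit

def is_hotlink(referer, own_domain):
    """True if an image request was referred by a page on someone else's site."""
    host = urlsplit(referer).hostname if referer else None
    if not host:
        # No usable referrer (direct visit, or stripped by the browser).
        return False
    own = own_domain.lower()
    # Your own domain and its subdomains are not hotlinks.
    return not (host == own or host.endswith("." + own))

print(is_hotlink("https://blog.yourdomain.com/post", "yourdomain.com"))
print(is_hotlink("https://some-other-site.example/gallery", "yourdomain.com"))
```

Collect the referring hosts that come back as hotlinks, then run them through the same quality check you used for your regular backlinks.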

Where can you find more backlinks?

Once you’ve finished your backlink audit and disavowed all the weak backlinks, the next step is to forge some better backlinks for your site.

Here are three quick ways to increase your backlink count:

Steal your competitors’ backlinks

The goal in this stage is to make sure that your site has backlinks from all the same sites as your competitors.

First, open Ahrefs and type your company’s site into the search bar.

Once the overview loads, click “Organic search” and then scroll down until you see “Top 10 competitors” in the right-hand side bar.

Open the drop-down menu next to a few of your competitors and click “Backlinks.” Once their backlinks load, enable the “Dofollow” filter.

You should now have a long list of backlinks that are pointing to your competitor’s site.

Scroll through this list and ask yourself:

“Since these brands are linking to my competitor, what can I do to get them to point to me too?”

For example, if your competitor is mentioned in a website’s list of resources, contact that site and see if they would be willing to mention you too!

Or, if your competition has written a guest post for another site, contact the site and write your own guest post for them.

Make sure the internet knows about you

The goal here is to get ahead of your competition by accumulating quick, easy, and free backlinks from reputable directories and similar sites.

Your business site deserves to be featured in online business directories, so if you haven’t done so already, go through the internet’s top directories and make a profile! Not only will this serve as free advertising for your business, it will also provide valuable backlinks to your site.

Here are some of my favorite directories to get you started:

- Better Business Bureau

- Yellow Pages

- Yelp

- MapQuest

- TripAdvisor

- And, of course, Facebook

Once you’ve posted backlinks on all the big directories, niche down and post listings in directories for your specific niche. For example, Yardbook (if you’re a landscaper), or Enjuris (if you are a personal injury attorney).

You should also reach out to web 2.0 and profile sites to see if they have resource pages or business directories where you can post a backlink.

Reach out to industry sites for backlinks

Lastly, this stage is all about getting ahead of your competitors by forging powerful backlinks on high-quality sites.

Use Google to find highly-rated sites that speak to your niche, or are considered authority sites, and ask them if you can submit a guest post with a backlink to your site.

Write the guest post, include a backlink, and tada! Profit. Or at the very least, higher rankings.

Final Backlink Tips

Keep these in mind during your link building:

- Don’t pursue backlinks from sites you already have backlinks from.

- Don’t build backlinks from low-rated or spammy sites.

- Remember to mark your links as “do-follow,” not “no-follow.”

- For the most part, your anchor text should always be brand-related and not composed of money keywords. Only use money keywords as anchor text when the backlink is from a high-authority site.

Popularity – Is Your Website Click-Worthy?

The final step in T.R.A.P., Popularity brings all the previous steps together by making sure your site is optimized for your human audience. Let’s learn how to recover from an algorithmic penalty by improving your site’s popularity!

Drive clicks with onsite SEO

Google won’t award you with high rankings unless you have the organic traffic to warrant it. After all, if lots of people are consistently visiting your site, it must mean you are offering them quality content and meeting their needs.

Here are some easy SEO tips to help you improve your organic traffic.

Create a content index

A content index is a spreadsheet that lists every piece of content on your site. Creating a content index will help you define the silo structure of your site and will clarify the keyword themes that you are going after. This is important because understanding your keyword themes is crucial in the next tip.

Conduct a title and H1 tag audit

Go through and edit all your post and page titles to ensure that they are SEO optimized. Here are some things to keep in mind during your audit:

- Keep your titles under 60 characters.

- Include your target keyword.

- Place the keyword as far to the left as possible.

- Use action words to entice clicks. Words like “fast,” “ultimate,” “simple,” “easy,” and “definitive.”

- Craft titles that communicate health, wealth, and happiness as quickly as possible.

- Make sure your title accurately represents what the content of your post or page is.
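
The mechanical parts of this checklist are easy to automate across a content index. Here’s a minimal Python sketch. The “keyword in the first half of the title” test is my own stand-in for “as far to the left as possible,” and the action word list is just the five examples above. Whether a title is accurate or communicates health, wealth, and happiness still needs a human.

```python
import re

ACTION_WORDS = {"fast", "ultimate", "simple", "easy", "definitive"}

def audit_title(title, keyword, max_length=60):
    """Return a list of checklist issues for one title (empty list means it passes)."""
    issues = []
    lowered = title.lower()
    if len(title) >= max_length:
        issues.append("too long")
    position = lowered.find(keyword.lower())
    if position == -1:
        issues.append("keyword missing")
    elif position > len(title) // 2:
        # Assumption: treat a keyword starting in the second half as too far right.
        issues.append("keyword too far right")
    # Match whole words so "breakfast" doesn't count as "fast".
    if not ACTION_WORDS & set(re.findall(r"[a-z]+", lowered)):
        issues.append("no action word")
    return issues

print(audit_title("Easy Local SEO Guide for Small Businesses", "local seo"))
print(audit_title("A Guide", "local seo"))
```

Run it over the title column of your content index and fix the pages with the longest issue lists first.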

Next, dive into each piece of content and make sure your H1 tags are also optimized. Often, H1 tags will simply repeat the title. This is fine, just follow the above tips and remember that while a title drives clicks, an H1 should drive people to scroll down the page.

Optimize your page and post descriptions

Now open each page and post and make sure your descriptions are optimized for emotion. You want to compel human readers to visit your site — so even if you’re ranked 3rd or 4th, people will still click your site if your description makes them feel something. The more clicks you have, the more popular Google thinks you are and the higher they will rank you!

One tip: when you’re writing your description, instead of ending it with a period, end it with an ellipsis.

For example: instead of saying, “Reviews say we have the best pasta!” write, “Reviews say we have the best…”

Ending your descriptions on a cliffhanger will tempt a lot more clicks.

Can your content be scanned quickly?

43% of readers skim through blog posts, and of the ones who read, only 20% actually finish the entire post.

What does this mean for your site?

It is crucial that your content is optimized for skimming or your visitors will be immediately leaving your site — hurting your rankings.

Tips for improving scannability:

- Use subheadings. Don’t stop after your H1 tag — put your H2 and H3 tags to work as well.

- Use bullet points.

- Use numbered lists.

- Write short paragraphs.

- Keep your language informal and friendly.

- Place a clickable table of contents at the beginning of long-form content.

Improve your rankings by encouraging social shares

Spikes in your site’s traffic can garner higher rankings. Two great ways to manufacture traffic spikes are:

Release online press releases

Publishing press releases can lead to a lot of quick backlinks, communicating a popularity signal to Google.

Some tips to keep in mind when creating press releases:

- Don’t put out more than 1 press release per month.

- Don’t use a money keyword as your anchor text — use your brand name or your naked URL.

- Once your press release goes live, share it on as many social networks as possible to get those clicks started!

Foster relationships with industry influencers and ask them to share your content

This is called “influencer PR” and, if done right, it can generate a massive traffic spike for your site.

What is an influencer?

An industry influencer is someone who is considered an expert in your niche: an individual people look to for advice, predictions, and insight. You can usually identify them because they are known by name in your niche, have large social followings, and do live events, release books, or run a heavily read blog.

One of the best ways to get an industry influencer to share your content and backlink to your site is to:

- Write a piece of content that features their tools or advice.

- Share your content with them in an email and highlight how you featured their tool or advice.

- Hope that they share your content on their social network.

If an influencer shares your content, leverage this opportunity by releasing a press release about it. Link to both the shared content and your own site in the press release.

A word of caution

Popularity jumps don’t make for long-standing rankings.

If your site doesn’t have a foundation of clean SEO, then a popularity boost might push up your rankings for a couple of months, but you’ll inevitably crash back to where you were before.

In order to capitalize on popularity spikes, invest in your technical SEO right now so that you are able to hold onto your high rankings should an influencer suddenly send thousands of new visits your way.